Chunk-oriented processing uses three components:

1. ItemReader - an input operation that provides the data. It reads the data that will be processed.
2. ItemProcessor - a processing operation used for item transformation. It processes an input object and transforms it into an output object.
3. ItemWriter - an output operation used to write the data transformed by the ItemProcessor. The data items can be written to a database, memory or an output stream.

One item is read from the ItemReader, handed to the ItemProcessor, and transformed into an output object. Items are read and processed one at a time and collected into a chunk; once the number of items read equals the commit interval, the entire chunk is written out via the ItemWriter, and then the transaction is committed.
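
To make the read-process-write cycle and the commit interval concrete, here is a minimal, framework-free sketch in plain Java. SimpleItemReader, SimpleItemProcessor, SimpleItemWriter and ChunkLoop are hypothetical stand-ins modelled on Spring Batch's interfaces, not the real `org.springframework.batch.item` types, and the "commit" is only counted, not performed against a database.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;

// Hypothetical stand-ins for Spring Batch's ItemReader, ItemProcessor and ItemWriter.
// They are NOT the real org.springframework.batch.item interfaces.
interface SimpleItemReader<T> {
    /** Returns the next item, or null once the input is exhausted. */
    T read();
}

interface SimpleItemProcessor<I, O> {
    /** Transforms one input item; returning null filters the item out. */
    O process(I item);
}

interface SimpleItemWriter<O> {
    /** Writes a whole chunk of output items in one call. */
    void write(List<O> chunk);
}

final class ChunkLoop {
    /**
     * Reads and processes items one at a time. Once commitInterval items have been
     * read (or the input runs out), the whole chunk is written and "committed".
     * Returns the number of commits.
     */
    static <I, O> int run(SimpleItemReader<I> reader,
                          SimpleItemProcessor<I, O> processor,
                          SimpleItemWriter<O> writer,
                          int commitInterval) {
        int commits = 0;
        boolean exhausted = false;
        while (!exhausted) {
            List<O> chunk = new ArrayList<>();
            int read = 0;
            while (read < commitInterval) {
                I item = reader.read();
                if (item == null) {
                    exhausted = true;
                    break;
                }
                read++;
                O out = processor.process(item);
                if (out != null) {
                    chunk.add(out); // filtered items (null) are not written
                }
            }
            if (read == 0) {
                break; // nothing was read, so there is no chunk to write
            }
            writer.write(chunk); // the entire chunk goes to the writer at once
            commits++;           // a real step would commit the transaction here
        }
        return commits;
    }

    /** Helper: a reader over an in-memory list. */
    static <T> SimpleItemReader<T> fromList(List<T> items) {
        Iterator<T> it = items.iterator();
        return () -> it.hasNext() ? it.next() : null;
    }

    public static void main(String[] args) {
        List<List<String>> written = new ArrayList<>();
        SimpleItemProcessor<String, String> upper = String::toUpperCase;
        int commits = run(fromList(List.of("a", "b", "c", "d", "e", "f", "g")),
                upper, chunk -> written.add(chunk), 3);
        System.out.println(written + " after " + commits + " commits");
    }
}
```

With seven input items and a commit interval of 3, the writer receives chunks of 3, 3 and 1 items, and three commits are counted. Note that the interval counts items *read*, so an item filtered out by the processor still uses up a slot in its chunk.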

# Spring Batch: read from a file and write to a database

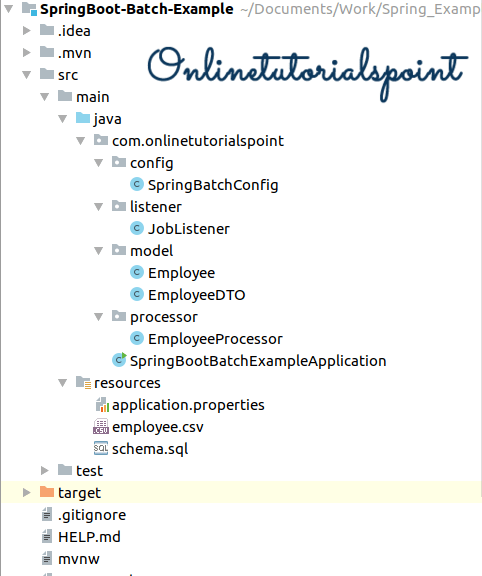

In this example, I will explain how to configure batch jobs with the Chunk Oriented Processing Model using Spring Boot. The Chunk Oriented Processing Model involves three components: an ItemReader, an ItemProcessor and an ItemWriter. A chunk-oriented step is used when the data items must be both read and written, while a Tasklet step is used when the step only needs to read or only needs to write data.
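
For contrast, the Tasklet style can be sketched the same framework-free way: instead of delegating to a reader, processor and writer, the step hands its single task to one Tasklet and keeps calling it until it reports that it is finished. This mirrors how Spring Batch re-invokes a Tasklet while it returns `RepeatStatus.CONTINUABLE`; TaskStatus, SimpleTasklet and TaskletLoop are hypothetical names used only for this sketch, not Spring Batch classes.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical stand-ins modelled on Spring Batch's Tasklet and RepeatStatus.
// They are NOT the real org.springframework.batch classes.
enum TaskStatus { CONTINUABLE, FINISHED }

interface SimpleTasklet {
    /** Performs (part of) the step's single task and says whether to run again. */
    TaskStatus execute();
}

final class TaskletLoop {
    /** Calls the tasklet until it reports FINISHED; returns the number of calls. */
    static int run(SimpleTasklet tasklet) {
        int calls = 0;
        TaskStatus status;
        do {
            status = tasklet.execute(); // a real TaskletStep runs each call in a transaction
            calls++;
        } while (status == TaskStatus.CONTINUABLE);
        return calls;
    }

    public static void main(String[] args) {
        // Hypothetical write-only step: append one summary line to a report.
        List<String> report = new ArrayList<>();
        int calls = run(() -> {
            report.add("import finished"); // the whole task happens in one call...
            return TaskStatus.FINISHED;    // ...so the step ends after it
        });
        System.out.println("finished after " + calls + " call(s): " + report);
    }
}
```

No reader, processor, writer or commit interval is involved here; the tasklet alone decides how much work each call does and when the step is done.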

Spring Boot provides a lot of utility classes to perform batch jobs and schedule them at regular intervals. This helps developers focus on writing only business logic and hence saves time and effort.

All batch processing can be described in its most simple form as reading in large amounts of data, performing some type of calculation or transformation, and writing the result out. Spring Batch provides three key interfaces to help perform bulk reading and writing: ItemReader, ItemProcessor and ItemWriter. The Spring Batch framework offers two processing styles: Chunk and Tasklet.

A few examples show the use of Spring Batch classes to read and write resources (CSV, XML and database):

- Read data from a CSV file and write it into a MySQL database, with the job metadata stored in the database.
- Read data from an XML file (XStream) and write it into the NoSQL database MongoDB, and unit test the batch job.
- Read data from an XML file (JAXB2), process it with an ItemProcessor, and write it into a CSV file.
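
For the first example (CSV file to MySQL), the read and process side can be sketched in plain Java: each `read()` call consumes one CSV line, skips a header row, and maps the line to a record, which a processor then normalises. Person, CsvPersonReader and UpperCaseProcessor are hypothetical names; in a real job, Spring Batch's FlatFileItemReader, an ItemProcessor implementation and a JdbcBatchItemWriter would take these roles, and the `List` below merely stands in for the MySQL table. Records require Java 16 or later.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.Reader;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;

// Hypothetical domain type for rows such as "Jill,Doe".
record Person(String firstName, String lastName) {}

// Hypothetical, simplified flat-file reader: one CSV line per read() call.
final class CsvPersonReader {
    private final BufferedReader in;
    private int linesToSkip;

    CsvPersonReader(Reader source, int linesToSkip) {
        this.in = new BufferedReader(source);
        this.linesToSkip = linesToSkip;
    }

    /** Returns the next Person, or null once the input is exhausted. */
    Person read() {
        try {
            String line;
            while ((line = in.readLine()) != null) {
                if (linesToSkip > 0) { linesToSkip--; continue; } // e.g. a header row
                if (line.isBlank()) continue;                    // ignore empty lines
                String[] fields = line.split(",", -1);
                if (fields.length != 2) {
                    throw new IllegalArgumentException("Expected 2 fields: " + line);
                }
                return new Person(fields[0].trim(), fields[1].trim());
            }
            return null;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}

// Hypothetical processor: upper-cases names before they would be written to the table.
final class UpperCaseProcessor {
    Person process(Person p) {
        return new Person(p.firstName().toUpperCase(Locale.ROOT),
                          p.lastName().toUpperCase(Locale.ROOT));
    }
}

final class CsvDemo {
    public static void main(String[] args) {
        CsvPersonReader reader =
                new CsvPersonReader(new StringReader("first,last\nJill,Doe\nJoe,Doe\n"), 1);
        UpperCaseProcessor processor = new UpperCaseProcessor();
        List<Person> table = new ArrayList<>(); // stands in for the MySQL table
        for (Person p = reader.read(); p != null; p = reader.read()) {
            table.add(processor.process(p));
        }
        System.out.println(table);
    }
}
```

Returning `null` at the end of input follows the same end-of-data convention that Spring Batch's ItemReader uses, which is what lets a chunk-oriented step know when to stop reading.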